The United States House Committee on Energy and Commerce held a hearing yesterday at which Facebook Chairman and CEO Mark Zuckerberg, Google CEO Sundar Pichai, and Twitter CEO Jack Dorsey testified on how each platform plans to stop the spread of extremism and misinformation. The hearing was held in response to the January 6 attack on the U.S. Capitol that left five people dead and more than 140 injured.

In his testimony, Zuckerberg outlined specific policies Facebook was implementing to minimize speech not protected under the First Amendment. He also called on Congress to reform intermediary liability protection under Section 230 of the United States Communications Decency Act, a piece of legislation that gives platforms such as Facebook immunity from liability for third-party content while also allowing tech companies to regulate content on their platforms.

Traditionally, startups in the U.S. have had free rein over innovation in digital communication because intermediary liability protection prevents governments from holding digital platforms legally responsible for illegal third-party content.

In his argument, Zuckerberg highlighted the need for Congress to consider modifying intermediary liability protection for unlawful posts across digital platforms, making that protection depend on a company’s ability to effectively combat the spread of misinformation rather than granting it to the company by default.

“Instead of being granted immunity, platforms should be required to demonstrate that they have systems in place for identifying unlawful content and removing it,” said Zuckerberg.

Zuckerberg concluded that digital platforms should not be held legally responsible for unlawful speech that evades detection, given the billions of posts published each day. They should, however, be required to have digital systems that address the spread of misinformation and extremism.

At the start of 2020, Facebook implemented several key policies to prevent the spread of misinformation about COVID-19 and the 2020 elections. Zuckerberg outlined the company’s approach to fighting misinformation in these two areas by highlighting general regulations and strategies and then applying them to each topic.

Facebook’s Approach to Combating Misinformation

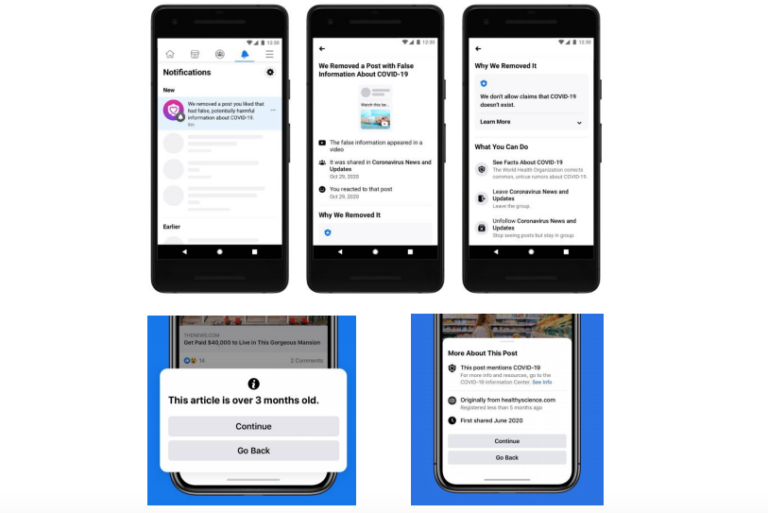

Facebook initiated a fact-checking program that employs 80 independent and certified third-party fact-checkers to reduce the spread of misinformation on Facebook and Instagram. The program includes a flagging system that identifies false information and warns users against engaging with or sharing flagged content in groups or on their personal profiles; it also identifies duplicate posts or articles that should be flagged. When posts are flagged as false, Facebook reduces their distribution.

“The program on average cuts future views by more than 80%,” said Zuckerberg.

The CEO outlined how Facebook works to interrupt the business model behind financially motivated spam, similar to that published during the 2016 election, by reducing the distribution of pages whose content is regularly flagged as false.

Zuckerberg stated that the platform can also remove the ability of groups to make a profit, and that it has taken steps to decrease targeted ads through system updates that reduce the number of posts and “clickbait” links that redirect users to substandard websites.

COVID-19

Facebook directed over two billion users to what Zuckerberg called “authoritative information” by publishing a COVID-19 Information Center and a Voting Information Center.

The company promoted science-based search results and provided data on symptoms and travel patterns to public health officials, researchers, and nonprofits to better assist their pandemic response. Users could also access information on why posts they previously engaged with were flagged as false and removed.


Concerning vaccines, the company has provided $120 million in ad credits to assist health ministries, nonprofits, and U.N. agencies in delivering vaccines and health information to billions of people around the world. Facebook is also providing technical support that helps governments and health organizations effectively market up-to-date vaccine information, so that people can learn where and when they can get vaccinated.

Election Misinformation

Facebook announced several policies before the 2020 election to uphold America’s democratic process, in response to the 2016 election scandal in which Russians were accused of hacking the Clinton campaign’s computer systems. “We invested in combatting influence operations on our platforms, and since 2017, we have found and removed over 100 networks of accounts for engaging in coordinated inauthentic behavior,” said Zuckerberg.

Nationally, the company collaborated with election officials to remove false claims about polling stations and flagged over 150 million pieces of content reviewed by Facebook’s independent third-party fact-checkers.

Zuckerberg stated that Facebook established policies against voter suppression that prohibited “explicit or implicit” fabrications about voting dates and locations, as well as attempts to weaponize the threat of COVID-19 to prevent people from casting in-person ballots. Facebook also worked to remove announcements on the platform that encouraged users to poll watch and to intimidate election officials and voters.

During ballot counting, Facebook kept its users updated by partnering with Reuters and the National Election Pool to provide election results on the platform’s Voting Information Center. The company also used its flagging system to warn users about information that was intended to invalidate the election outcome or delegitimize voting systems.

What’s Next

Zuckerberg’s testimony highlights consistencies between government and business approaches to protecting free speech. Facebook hosts 2.8 billion users worldwide, 190 million of whom are located in the U.S. Judging by the events at the Capitol on January 6, relinquishing accountability is no longer a viable solution, and policy change is critical if tech hopes to serve its users for good.

Editor’s Note: The opinions expressed here by Impakter.com contributors are their own, not those of Impakter.com. — In the Featured Photo: A tablet depicts the U.S. First Amendment. Featured Photo Credit: Public Knowledge.